Reality Composer is Apple's tool for building augmented reality experiences. With it, a developer can create scenes, objects, and frames, and can import and export assets. A developer can build animated 3D scenes and models for the user interface, and can develop games and other 3D features while writing less code, because the interactive 3D modeling is done visually. Developers can add multiple scenes and frames to create animated, interactive features, and can configure physics, sequence, behavior, motion, style, and duration.

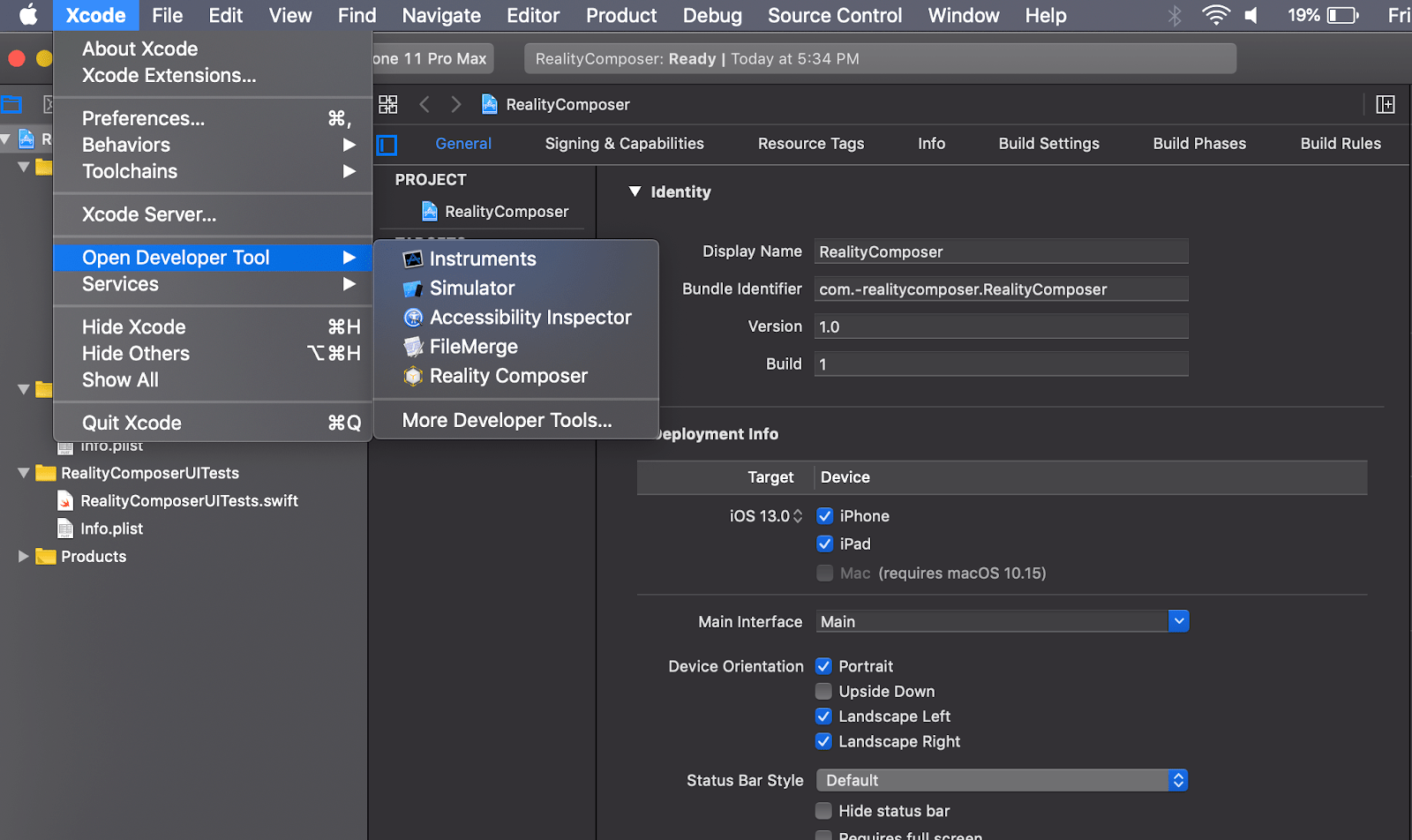

Reality Composer is available from Xcode 11 onwards. iPhone application developers can build a scene in Reality Composer and export it as a file. The exported file can then be added to other projects as required, so it works in a plug-and-play fashion on the device.

Features of Reality Composer:

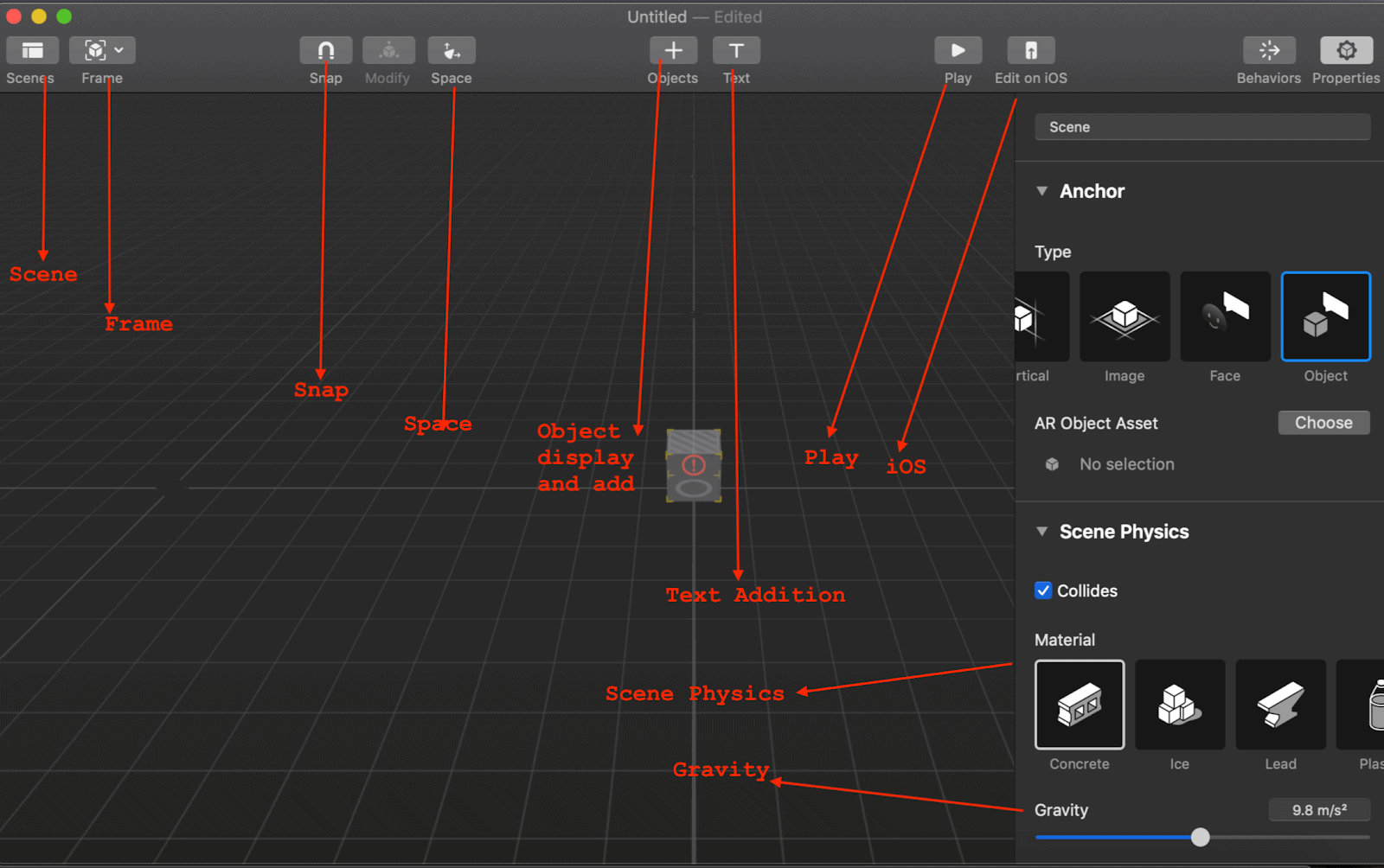

- Scene – A scene can be created from multiple objects and treated as a single object.

- Frame – Once the scene is created, each frame can be set up to transition from one place to another.

- Space – It represents a camera view where the user can rotate and see the scene in all directions.

- Play button – Users or developers can play the scene or frame.

- Edit on iOS – An iOS device can be connected, and the scene can then be edited on it.

- Physics – On every scene and frame, the developer can apply specific physics to behaviors such as rotation, motion, touch, and drag.

- Objects and images – Objects and images can be added as desired.

- Performance and Scalability – Reality Composer improves performance and scalability, and it makes rendering the UI easy.

- The file can be imported and used in other projects as required, so a single Reality Composer file can be reused across multiple projects.

- Face/Object/Horizontal/Vertical – The anchor surface can be horizontal or vertical. A face can also be used as an anchor, and animation effects can be integrated.

- Sound – A sound or voice can be added to any scene so that it plays inside the frame or scene.

- MultiConnectivity – Multiple users can connect and play a game or take part in a particular activity, but the developer needs to enable the capability and implement the feature while developing the app.
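The reuse of an exported file across projects, mentioned in the list above, can be sketched with RealityKit's `Entity.loadAnchor(named:in:)`. This is a minimal sketch, not the only way to load a scene: the helper name `loadExportedScene` and the file name `MyScene` in the usage note are hypothetical, and the sketch assumes the exported `.reality` file has been added to the app bundle.

```swift
import Foundation
import RealityKit

/// Hypothetical helper: loads an exported Reality Composer scene from a bundle
/// by file name. Returns nil when the file is missing or cannot be read.
@MainActor
func loadExportedScene(named fileName: String, in bundle: Bundle? = nil) -> AnchorEntity? {
    do {
        // Passing nil for the bundle makes RealityKit search the main bundle.
        return try Entity.loadAnchor(named: fileName, in: bundle)
    } catch {
        print("Could not load \(fileName): \(error)")
        return nil
    }
}
```

In a view controller that owns an `ARView`, the result could be used as `if let scene = loadExportedScene(named: "MyScene") { arView.scene.addAnchor(scene) }`. Returning an optional keeps the call site simple when a project does not ship the file.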

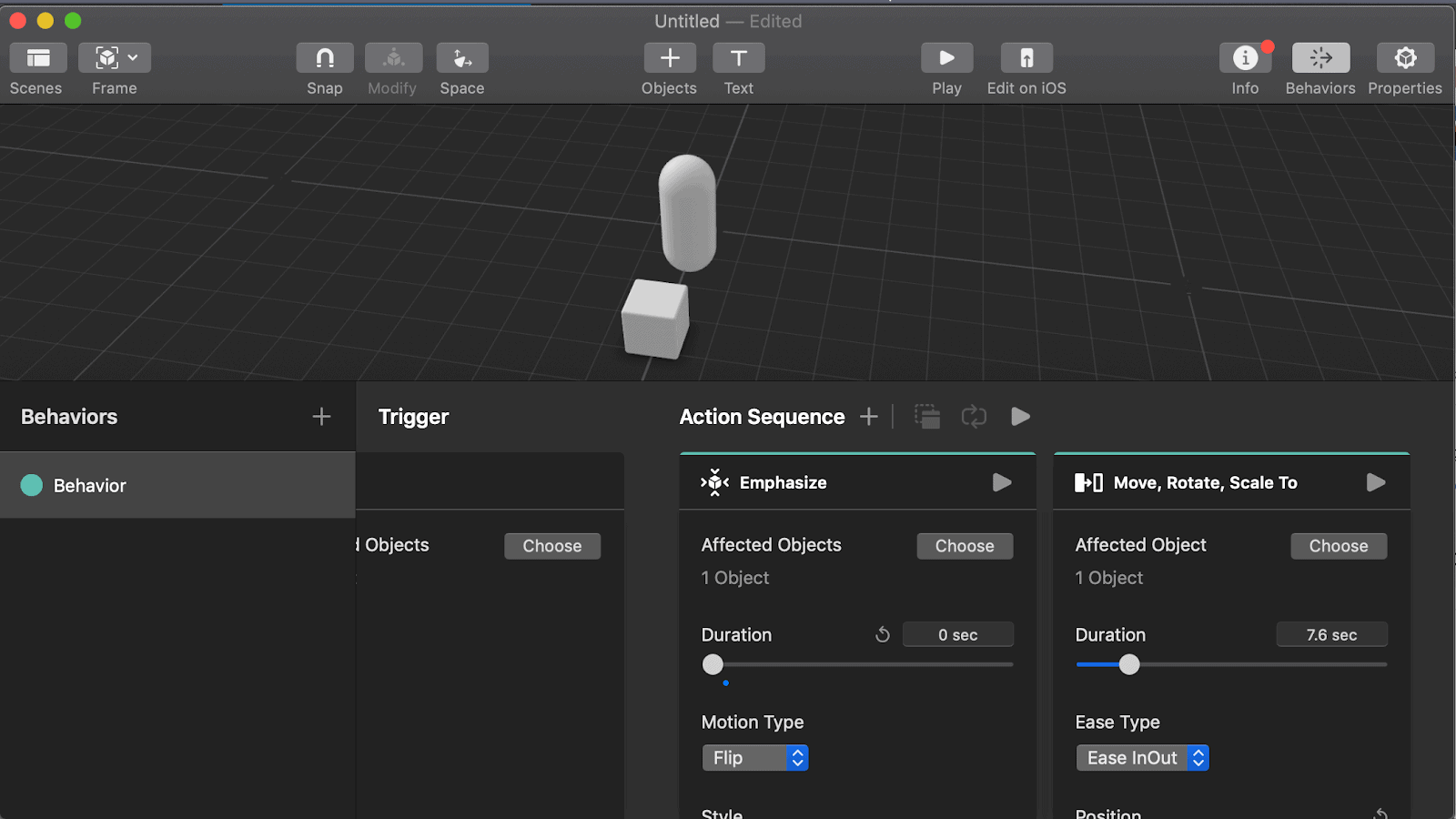

The scene is created with a cube and a cylinder. Behaviors are added to move, rotate, and scale them, and physics is applied to the scene so that the objects can move some distance, rotate, and fall.

    // Step 1: Import the required frameworks
    import UIKit
    import ARKit
    import RealityKit

    class RealityComposerController: UIViewController {

        // Step 2: Create the view that hosts the AR environment
        var realityComposerView = ARView(frame: .zero)

        override func viewDidLoad() {
            super.viewDidLoad()

            // Step 3: Add the AR view to the main view so that it is visible
            view.addSubview(realityComposerView)

            // Step 4: Size the AR view to fill the main view
            realityComposerView.frame = view.bounds
            realityComposerView.autoresizingMask = [.flexibleWidth, .flexibleHeight]

            // Step 5: Load the Reality Composer scene by name, asynchronously.
            // The loader call is missing from the original listing. "Experience"
            // and "Box" are placeholders taken from Xcode's AR template; use the
            // names Xcode generates from your own .rcproject file and scene.
            // The generated loader is assumed to deliver a Result.
            Experience.loadBoxAsync { [weak self] result in
                guard case .success(let realityComposerScene) = result else {
                    return
                }

                // Step 6: Add the loaded scene to realityComposerView as an anchor
                self?.realityComposerView.scene.addAnchor(realityComposerScene)
            }
        }

        override func viewWillAppear(_ animated: Bool) {
            super.viewWillAppear(animated)

            // Step 7: Create an ARWorldTrackingConfiguration
            let configuration = ARWorldTrackingConfiguration()

            // Step 8: Run the session with the configuration to see the scene
            realityComposerView.session.run(configuration)
        }
    }
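For comparison, the physics and the rotate and scale behaviors that Reality Composer configures visually can be approximated in RealityKit code. The following is a rough sketch under stated assumptions, not what Reality Composer generates: the function names, the 0.1 m default size, the blue material, and the target rotation and scale are all illustrative choices, and only the cube is shown.

```swift
import RealityKit
import simd

/// Hypothetical helper: builds a cube that gravity and collisions can act on.
@MainActor
func makeFallingCube(size: Float = 0.1) -> ModelEntity {
    let cube = ModelEntity(
        mesh: .generateBox(size: size),
        materials: [SimpleMaterial(color: .blue, isMetallic: false)]
    )
    // Collision shapes must exist before the physics simulation can use the entity.
    cube.generateCollisionShapes(recursive: true)
    // A dynamic body is driven by the simulation (gravity, collisions).
    cube.physicsBody = PhysicsBodyComponent(massProperties: .default,
                                            material: nil,
                                            mode: .dynamic)
    return cube
}

/// Hypothetical helper: returns the entity's transform turned 90 degrees
/// around the vertical axis and scaled to twice the original size.
@MainActor
func rotatedAndScaled(_ entity: Entity) -> Transform {
    var target = entity.transform
    target.rotation = simd_quatf(angle: .pi / 2, axis: [0, 1, 0])
    target.scale = [2, 2, 2]
    return target
}
```

The transform returned by `rotatedAndScaled` can be passed to `move(to:relativeTo:duration:)` on the entity to animate the change over time. Keeping the target transform separate from the entity construction mirrors the way Reality Composer separates objects from the behaviors applied to them.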